Multitasking is one of the most important features offered by a real-time operating system. This feature allows multiple tasks to execute concurrently on a single system. Or at least it looks that way at first sight…

First impression

For the sake of an example, let’s say that there is a garden watering supervisor with an LCD, a touch panel, an Ethernet interface and logging. It has six tasks:

- measurements of weather conditions with external sensors,

- supervisor of sprinkler valves,

- graphical user interface with LCD and touch panel,

- network stack with a webserver,

- logging of data to persistent storage,

- calculating an answer to the ultimate question of life, the universe, and everything.

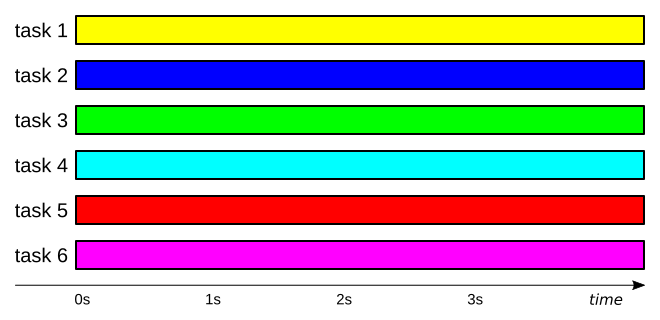

If we assume that a single second is the smallest observable unit of time for our naked eye – with no aid from a stopwatch, digital measuring equipment and so on – this system would appear to us as shown in the accompanying image.

If we also assume that the portrayed system is running on single-core hardware and that no voodoo is involved, we come to the unavoidable conclusion that multitasking is just an illusion. To see through it, we just need to look closely enough.

A closer look

So let’s zoom the graph above by just a few orders of magnitude.

As you can see, at any given moment our system is executing just a single task. Because the tasks are switched frequently, they appear to execute simultaneously. This illusion is made possible by one basic aspect of a typical system – the tasks need to interact either with other tasks or with the external world. Such interactions usually involve waiting, because our system is ridiculously fast in comparison to the things it needs to interact with. Let’s go through our example tasks.

- External sensors which can be used to measure weather conditions rarely allow data to be read with frequencies greater than a few kilohertz. But even that is too fast – there’s absolutely no point in measuring temperature, humidity, pressure, etc. with such frequencies – they just don’t change that often.

- External actuators are usually even slower than external sensors. From the moment the sprinkler valve starts opening till the moment it is actually fully open, our system can probably calculate a few million digits of π out of sheer boredom.

- A human user can generate just a few touch panel events per second and won’t notice delays as long as a few dozen milliseconds – almost an eternity from the perspective of our system.

- Networks usually have high throughput, but only on average – because of data framing and high latency, the data arrives in bursts with comparatively long pauses in between.

- Obvious things first – to log something you have to have it. This means that the logging task has to wait for data, for example coming from the measurements task. Just like networks, persistent storage usually has high average throughput, but it is quite typical for such a medium to require a short delay for each written block of data.

- Our system has seven and a half million years to get this answer and actually everyone knows that it’s 42, so this task just pretends to do useful work.

Whenever a task reaches a point in the code which involves waiting, the kernel switches from this task to another one which is ready to do something useful. This maximises utilisation of the system, as no time is wasted on busy-waiting for some event to happen.
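To make the switching a bit more tangible, here is a minimal sketch of the idea in Python. It is a simulation, not RTOS code: the `task` and `run` functions, the task names and all durations (1 ms of work followed by 9 ms of waiting, repeated 5 times) are assumptions made up for this illustration. Each task tells the toy "kernel" how long it needs the CPU and how long it will wait afterwards, and the kernel hands the CPU to whichever task is ready.

```python
import heapq
from collections import deque

def task(steps, work_ms, wait_ms):
    """A task that repeatedly works briefly, then blocks on something slow."""
    for _ in range(steps):
        yield work_ms, wait_ms  # "run me for work_ms, then wake me after wait_ms"

def run(tasks):
    """Simulate one CPU; return (timeline of CPU slices, total elapsed ms)."""
    now = 0
    ready = deque(tasks.items())  # tasks that can use the CPU right now
    sleeping = []                 # heap of (wake_time, seq, name, generator)
    seq = 0                       # tie-breaker so generators are never compared
    timeline = []                 # (start_ms, end_ms, name)
    while ready or sleeping:
        # Wake every task whose wait has elapsed.
        while sleeping and sleeping[0][0] <= now:
            _, _, name, gen = heapq.heappop(sleeping)
            ready.append((name, gen))
        if not ready:
            now = sleeping[0][0]  # nothing to do: idle until the next wake-up
            continue
        name, gen = ready.popleft()
        try:
            work_ms, wait_ms = next(gen)
        except StopIteration:
            continue              # this task has finished
        timeline.append((now, now + work_ms, name))
        now += work_ms
        seq += 1
        # The task now waits; the CPU is free for somebody else.
        heapq.heappush(sleeping, (now + wait_ms, seq, name, gen))
    return timeline, now

tasks = {name: task(steps=5, work_ms=1, wait_ms=9)
         for name in ("measurements", "valves", "gui")}
timeline, total_ms = run(tasks)
busy_ms = sum(end - start for start, end, _ in timeline)
sequential_ms = 3 * 5 * (1 + 9)  # same work done one task at a time, busy-waiting
print(f"multitasked: {total_ms} ms, busy-waiting: {sequential_ms} ms, CPU busy: {busy_ms} ms")
```

With these made-up numbers the three tasks finish after 52 simulated milliseconds instead of 150, even though the CPU is only busy for 15 of them and never runs two tasks at once. A real kernel also has to save and restore task contexts and handle priorities, which this sketch ignores.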

The world of technology is full of such illusions – it’s enough to mention displaying 25 still images per second to trick our perception into seeing smooth movement, or placing red, green and blue LEDs so close to each other that they appear as a single white spot of light. It’s all just a matter of scale.

Real life multitasking

This scheme is very similar to the way we achieve multitasking in real life:

- while riding a bus you can read a newspaper,

- you can have a talk with your family during a meal,

- while driving a car you can listen to some music,

- you usually answer a private phone call while at work,

- a commercial break on TV is a great moment to prepare a hot beverage.

If you look at that from a greater distance you can honestly say that multiple activities occurred concurrently. But if you look closer, you’ll have to admit this is not entirely true…

- While actually entering or leaving the bus, buying a ticket or finding a place to sit/stand, you did not read the newspaper.

- You did not talk while you were actually chewing your food.

- You don’t remember the exact lyrics of the song played while you were trying to overtake that truck on a busy road.

- Unless you work in a call center, you cannot really talk on the phone and do your work simultaneously.

- While you were in the kitchen you missed all those great inventions in the fields of dish detergents, nutritional supplements and refrigerators.

Benefits of multitasking

Unfortunately, multitasking neither magically multiplies the processing power of our system nor clones its cores. To be perfectly honest, I must say that it actually incurs a small overhead – usually much less than 1% – due to task switching. So why bother? Where’s the benefit?

Decoupling of timing requirements

The most important (at least in my opinion) benefit of using multitasking is that it almost completely removes complex timing interdependencies from tasks. By assigning a priority to each task, you decide how important it is in relation to other parts of the system. A task that is waiting for an event will start processing it as soon as possible, no matter what other tasks are doing at this moment – the only requirement is for this task to have sufficiently high priority. As timing management is handled by the multitasking kernel, you can easily add, remove or modify tasks in your system, without worrying much about violating timing requirements of other tasks. This allows you to guarantee that the application will respond to an event within bounded time.

If our garden watering supervisor detects that humidity has dropped below a certain threshold, it will open the sprinkler valves almost immediately. It won’t matter if the user is playing with the LCD at the moment, if the system is currently under a DDoS attack from the network or if the logging task has decided that it’s a perfect moment to compress the whole contents of the persistent storage. All other tasks don’t need to know anything about that event or how important it is, yet the multitasking system will be able to react within just a few microseconds. So you can rest assured that your garden will always be watered on time!
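The effect of priorities can be sketched with a tiny simulation of fixed-priority preemptive scheduling. Everything here is an assumption made for illustration: the `Job` class, the `simulate` function, the convention that a higher number means a more important task, and all the timings. Context-switch cost is ignored.

```python
from dataclasses import dataclass

@dataclass
class Job:
    name: str
    priority: int      # higher number = more important (assumption of this sketch)
    release_us: int    # moment the event arrives
    cpu_us: int        # CPU time needed to handle it
    done_us: int = -1  # filled in by the simulation

def simulate(jobs):
    """Fixed-priority preemptive scheduling on one CPU, 1 us resolution.

    Returns the response time (completion minus release) of every job.
    """
    remaining = {job.name: job.cpu_us for job in jobs}
    now = 0
    while any(remaining.values()):
        ready = [j for j in jobs if j.release_us <= now and remaining[j.name] > 0]
        if ready:
            # Always run the most important ready job, preempting the others.
            job = max(ready, key=lambda j: j.priority)
            remaining[job.name] -= 1
            if remaining[job.name] == 0:
                job.done_us = now + 1
        now += 1
    return {job.name: job.done_us - job.release_us for job in jobs}

response = simulate([
    Job("logging", priority=0, release_us=0, cpu_us=50_000),
    Job("network", priority=1, release_us=0, cpu_us=20_000),
    Job("gui",     priority=2, release_us=0, cpu_us=5_000),
    Job("valves",  priority=3, release_us=1_234, cpu_us=10),
])
print(response["valves"])  # response time of the valve task in microseconds
```

In this sketch the valve job needs 10 µs of CPU time and is done 10 µs after its event arrives, no matter how much work the other three tasks have queued up. The lower-priority tasks simply finish a little later.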

Maximisation of resource utilisation

By removing all busy-waiting and polling, multitasking allows the processing power of the system to be used to the fullest extent. This can bring significant improvements in areas such as hardware requirements, power consumption, throughput, responsiveness, number of supported features and more.

To make our garden watering supervisor responsive you don’t need to run it on an extremely fast chip, break every piece of code into very small chunks, move the majority of your code into interrupt handlers and so on. You would probably have to do that with a super loop, event-driven or state machine architecture – to increase the frequency of polling for flags and to keep response time within some reasonable bounds. Just use multitasking!
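A bit of arithmetic shows why a super loop pushes you towards tiny chunks of code. In the worst case an event arrives just after the loop has polled its flag, so every other handler runs once before the flag is checked again. The function name and all durations below are invented for this illustration.

```python
def worst_case_latency_us(chunk_us, target):
    """Worst-case delay before a super loop gets back to `target`.

    The event arrives just after the loop polled the target's flag,
    so every other handler runs once before the flag is checked again.
    """
    return sum(duration for name, duration in chunk_us.items() if name != target)

# Longest uninterrupted run of each handler, in microseconds (made-up numbers).
monolithic = {"valves": 10, "gui": 5_000, "network": 20_000, "logging": 50_000}
chunked = {"valves": 10, "gui": 100, "network": 100, "logging": 100}

print(worst_case_latency_us(monolithic, "valves"))  # 75000
print(worst_case_latency_us(chunked, "valves"))     # 300
```

To get from 75 ms down to 0.3 ms, every handler had to be cut into pieces of at most 100 µs, and any new feature added to the loop makes the number worse again.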

Code portability

Because there are no timing interdependencies between tasks, they are modular and encapsulated. They also use a defined set of standard communication and synchronisation mechanisms, such as semaphores, mutexes, queues, signals and so on. Thanks to that, they are very easy to reuse in a different project, which can run on different hardware with a different system configuration.

You can easily copy any task from our example system into a project that requires some of its functionality – be it logging, networking, graphical user interface or calculating an answer to the ultimate question of life, the universe, and everything. These new projects may have nothing to do with supervision, water or gardening, but multitasking allows each task to have minimal coupling with the rest of the system. Generic and complete, such tasks can be considered to be standalone modules.
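Here is a rough sketch of what such minimal coupling looks like, with Python threads and `queue.Queue` standing in for RTOS tasks and a message queue. The function names, the sample values and the `None` end marker are conventions of this sketch only. Note that the logger knows nothing about sensors or gardens; all it depends on is the queue.

```python
import queue
import threading

def measurements(out_q, samples):
    """Producer: hands samples over without knowing who consumes them."""
    for value in samples:
        out_q.put(value)
    out_q.put(None)  # end marker (a convention of this sketch)

def logger(in_q, storage):
    """Consumer: blocks (no busy-waiting) until there is something to log."""
    while True:
        value = in_q.get()
        if value is None:
            break
        storage.append(f"humidity={value}")

q = queue.Queue(maxsize=4)  # bounded, like a typical RTOS queue
log = []
threads = [
    threading.Thread(target=measurements, args=(q, [41, 39, 36])),
    threading.Thread(target=logger, args=(q, log)),
]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(log)
```

Moving `logger` to another project would only require something on the other end of the queue that puts values into it.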

It is also worth noting that code portability can be viewed from the other side – integration of third-party modules (TCP/IP stacks, USB stacks, file systems, complex drivers, frameworks, etc.) with your application is much easier with a multitasking system than with any other typical embedded software architecture. In fact, many external libraries are written with an operating system in mind.

Who needs multitasking?

Unfortunately, multitasking is not the silver bullet of software architecture. It has a few important advantages, but – as you can probably imagine – it also has some drawbacks (for now let’s mention increased RAM usage and various problems related to resource sharing). In some applications multitasking will reduce the overall complexity, while in others it may do the exact opposite.

Generally speaking, if the application can be broken down into multiple loosely coupled pieces (like our example system) then using multitasking is probably a good idea. On the other hand, if the system performs one specific activity, then multitasking won’t have any significant advantage over other architectures.

In the end, it is always your choice…
